In February 2019, the San Francisco-based research lab OpenAI announced that its artificial intelligence system, GPT-2, could write convincing passages of English. If you feed it the beginning of a sentence or paragraph, it will continue the core thought for as long as an essay, with almost human-like coherence.

The lab is now exploring what happens when the same algorithm is instead fed part of a picture. The result received an honorable mention for best paper at this week’s International Conference on Machine Learning. It opens a new avenue for image generation, ripe with consequences and opportunity.

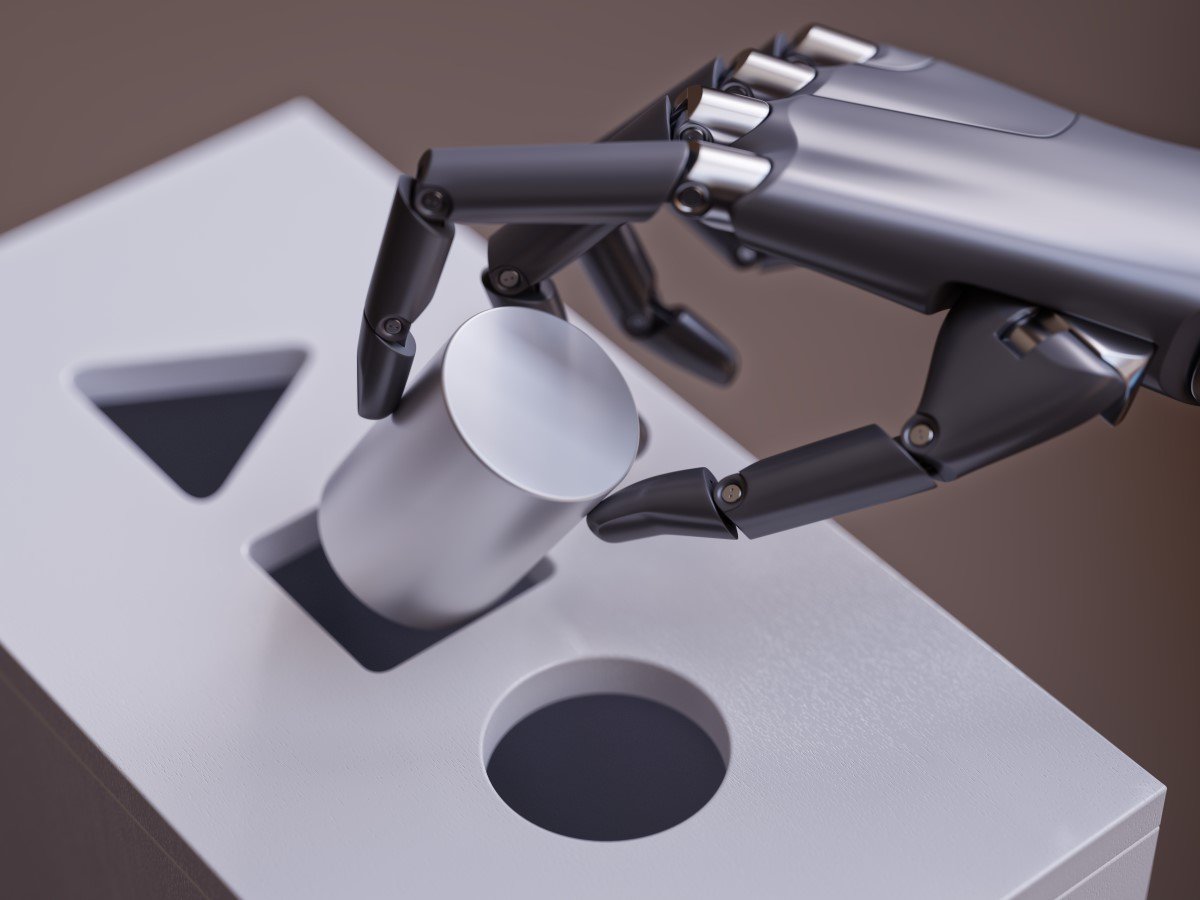

Researchers at OpenAI decided to swap words for pixels and train the same algorithm on pictures from ImageNet, the most popular image bank for deep learning. The algorithm, however, is designed to work with one-dimensional data, that is, strings of text.

So they unfurled the images into a single sequence of pixels. The new model, named iGPT, was still able to grasp the two-dimensional structures of the visual world. Given the sequence of pixels for the first half of an image, it could predict the second half in ways that a human would deem sensible.
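To make the unfurling step concrete, here is a minimal sketch in plain Python. It assumes the pixels are read row by row, left to right; the article does not say which ordering was used, and the helper names `unfurl` and `refold` are hypothetical. It illustrates the idea only and is not OpenAI's code.

```python
def unfurl(image):
    """Flatten a 2D grid of pixels (a list of rows) into one sequence.

    Assumption: pixels are read row by row, left to right.
    """
    return [pixel for row in image for pixel in row]


def refold(sequence, width):
    """Rebuild the 2D grid from a flattened sequence, given the row width."""
    return [sequence[i:i + width] for i in range(0, len(sequence), width)]


# A toy 4x4 grayscale "image"; each number stands in for one pixel value.
image = [
    [0, 1, 2, 3],
    [4, 5, 6, 7],
    [8, 9, 10, 11],
    [12, 13, 14, 15],
]

sequence = unfurl(image)

# The first half of the sequence plays the role of the prompt,
# the second half is what a model would be asked to fill in.
half = len(sequence) // 2
prompt, hidden = sequence[:half], sequence[half:]

# Folding the full sequence back recovers the original picture.
restored = refold(sequence, width=4)
```

With this ordering, the prompt corresponds to the top two rows of the toy image and the hidden part to the bottom two. The flattened sequence carries no explicit notion of rows or columns, so any two-dimensional structure has to be picked up from the data itself.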

The results are startlingly impressive, and they demonstrate a new path for using unsupervised learning, which trains on unlabeled data, in the development of computer vision systems. Early computer vision systems in the mid-2000s trialed such techniques, but they fell out of favor as the field turned to supervised learning. The benefit of unsupervised learning, however, is that it allows an artificial intelligence system to learn about the world without a human filter. It also reduces the manual labor of labeling data.
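One way to see why no labels are needed is the sketch below. It assumes the model learns by predicting each pixel from the pixels that come before it, by analogy with how a text model continues a sentence. The function name `next_pixel_pairs` and the pixel values are made up for illustration.

```python
def next_pixel_pairs(sequence):
    """Turn one unlabeled pixel sequence into (context, target) training pairs.

    Every target is simply the next pixel in the data, so no human
    annotation is involved.
    """
    return [(sequence[:i], sequence[i]) for i in range(1, len(sequence))]


# Hypothetical pixel values from a tiny flattened image.
pixels = [12, 40, 40, 200]

pairs = next_pixel_pairs(pixels)
for context, target in pairs:
    print(context, "->", target)
```

A supervised system would instead need a human-provided label, such as "cat", for every picture.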

iGPT uses the same algorithm as GPT-2, which shows how promisingly adaptable that algorithm is. This is in line with OpenAI’s ultimate ambition.