Any researcher with an interest in applying machine learning to real-world problems has likely received a response like this: ‘The authors present a solution for an original and highly motivating problem. Nevertheless, it is an application, and the significance seems limited for the machine-learning community’.

Those words are straight from a review one journalist received for a paper he submitted to the Neural Information Processing Systems (NeurIPS) conference, a top venue for machine-learning research. He has seen the refrain time and again in reviews of papers where he and his coauthors presented a method motivated by an application, and he has heard similar stories from countless others.

That made the journalist wonder: if the community feels that aiming to solve high-impact real-world problems with machine learning is of limited significance, then what is it trying to achieve?

The goal of artificial intelligence is to push forward the frontier of machine intelligence. In the field of machine learning, a novel development usually means a new algorithm or procedure or, in the case of deep learning, a new network architecture. This hyper-focus on novel methods leads to a scourge of papers that report marginal or incremental improvements on benchmark data sets and exhibit flawed scholarship as researchers race to top the leaderboard.

Meanwhile, many papers that describe new applications present both novel concepts and high-impact results. Nevertheless, even a hint of the word ‘application’ seems to spoil a paper for reviewers. As a result, such research is marginalized at major conferences. The authors’ only real hope is to have their papers accepted at workshops, which rarely get the same attention from the community.

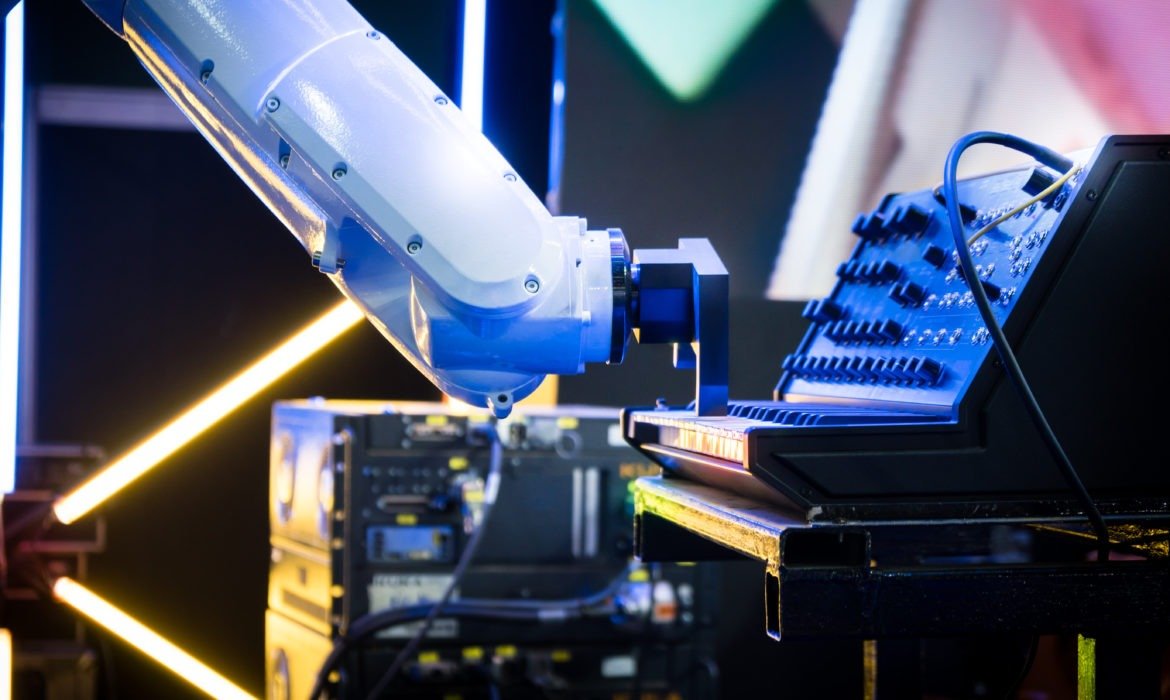

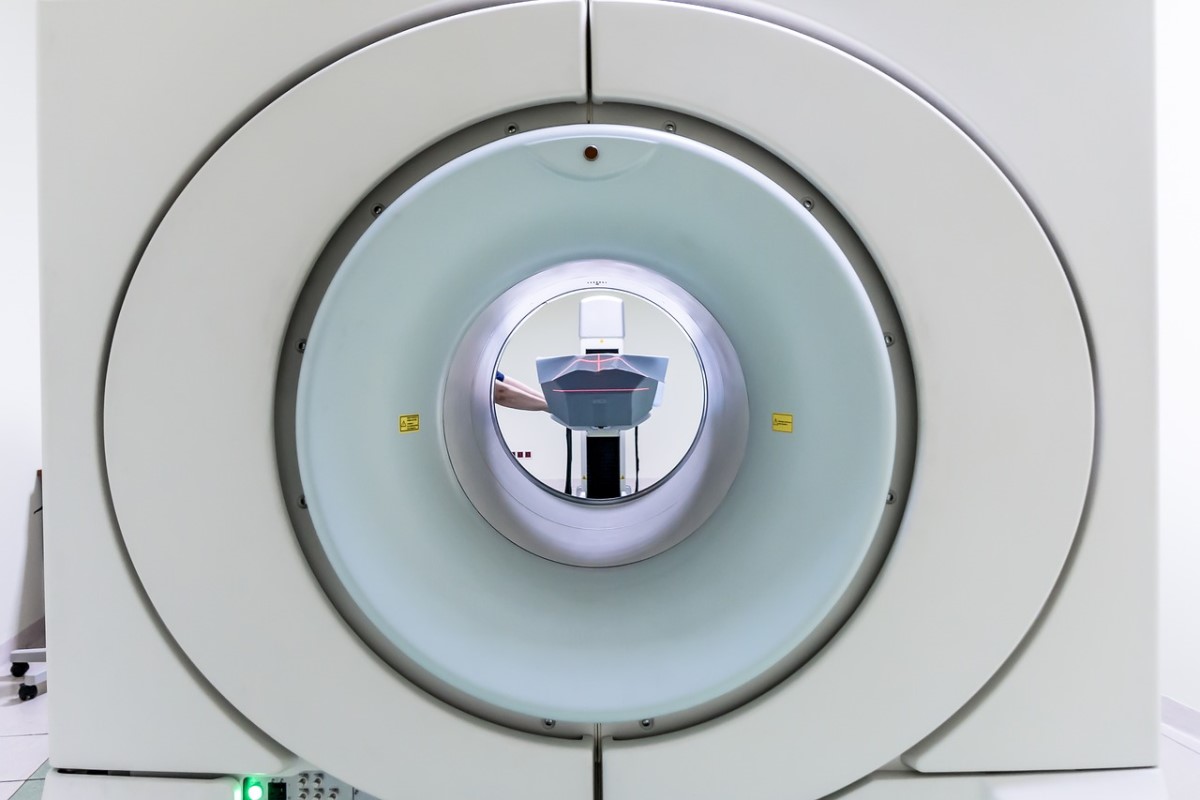

That is a problem, because machine learning holds great promise for advancing health, agriculture, scientific discovery, and more. The first image of a black hole was produced using machine learning. So are the most accurate predictions of protein structures, an important step for drug discovery. What other groundbreaking discoveries would we have made by now if others in the field had prioritized real-world applications?

That is not a new revelation.

Kiri Wagstaff, a NASA computer scientist, wrote a classic paper titled ‘Machine Learning that Matters’, in which she argued that much of current machine-learning research had lost its connection to problems of import to the larger world of science and society. In the same year that Wagstaff published her paper, a convolutional neural network called AlexNet won a high-profile competition for image recognition centered on the popular ImageNet data set, which led to an explosion of interest in deep learning. Unfortunately, the disconnect that she described has only grown worse since then.

Marginalizing applications research has real consequences. Benchmark data sets, such as ImageNet or COCO, have been vital in advancing machine learning. They enable algorithms to be trained and compared on the same data. Nonetheless, those data sets contain biases that can get built into the resulting models. More than half of the images in ImageNet, for example, come from the United States and Great Britain.